States and districts should exercise caution before using school climate survey data to compare schools

In recent years, the education field has recognized that successful schools feature not only strong academics but also a clear focus on creating safe and supportive learning environments, providing access to health supports, and addressing students’ social and emotional needs. Federal mandates now reflect this more holistic understanding of the educational experience. The 2015 Every Student Succeeds Act (ESSA) required states to build a measure of school quality or student success into their school accountability systems. To meet this mandate, at least six states proposed using school climate survey data in their initial ESSA plans. States’ interest in school climate surveys is not limited to federal requirements, however: As of 2019, 16 states are piloting statewide climate surveys, using school climate data for accountability purposes, or simply reporting school climate data to promote public transparency. Drawing quality comparisons between schools from school climate data, though, requires measures that are both designed and validated for that purpose. Unfortunately, while many available surveys effectively measure individuals’ perceptions of their school environments within a single school, few have been validated for comparing school climate between schools.

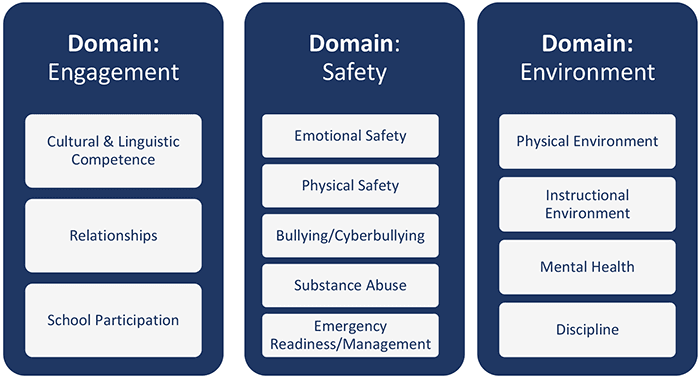

Using data from an evaluation of a school climate initiative in Washington, DC, we recently published a paper in AERA Open that sought to validate the U.S. Department of Education’s (ED) School Climate Survey (EDSCLS) as both an individual- and school-level measure. EDSCLS is a freely available survey platform for local and state education agencies, designed to assess school climate according to ED’s school climate framework (see image). The framework is broken into three domains (Engagement, Safety, and Environment), each composed of a series of subdomains (e.g., Engagement is made up of Cultural & Linguistic Competence, Relationships, and School Participation). ED conducted an initial validation study to test whether EDSCLS could, in fact, measure each domain and subdomain within ED’s framework. That study, however, only confirmed that EDSCLS could validly measure ED’s school climate framework at the individual level; that is, that the tool measures each individual student’s own perceptions of school climate. It did not examine whether EDSCLS data can be aggregated for each domain and subdomain at the school level, which is necessary to make school-to-school comparisons.

Our recent analysis directly tests whether EDSCLS can be used as a school-level measure of ED’s school climate framework. Our findings indicate that it cannot in its full form: while our individual-level results were similar to those of the initial validation study, ED’s school climate framework did not adequately fit our sample’s data at the school level. A simpler model, which measured only the overarching domains of Engagement and Environment (but not their associated subdomains), did produce valid between-school measurements. The same was not true of the Safety domain, which did not produce valid between-school measurements.

These findings do not mean that schools should discontinue using EDSCLS or other school climate measures. Indeed, we found that EDSCLS is a valid and effective tool for schools to understand how their students perceive their school environments, and to target and evaluate programs, practices, and policies to improve school climate. Our findings do suggest, however, that states and districts should use caution when attempting to compare survey data between schools, and that more work is needed before such tools are used for accountability.

Related Research

- Measuring School Climate: Validating the Education Department School Climate Survey in a Sample of Urban Middle and High School Students

- Improving school climate in the District of Columbia

- Youth Bullying Prevention in the District of Columbia School Year 2017-2018 Report

- Compared to majority white schools, majority black schools are more likely to have security staff

- Some states are missing the point of ESSA’s fifth indicator

This blog was supported by Award No. 2015-CK-BX-0016, awarded by the National Institute of Justice, Office of Justice Programs, U.S. Department of Justice. The opinions, findings, and conclusions or recommendations expressed in this publication are those of the authors and do not necessarily reflect those of the U.S. Department of Justice.

© Copyright 2024 Child Trends
